The user only followed pages or groups recommended by Facebook or encountered through those recommendations. The author of the research note termed the experience an “integrity nightmare.” While Haugen’s disclosures have painted a damning picture of Facebook’s role in spreading harmful content in the US, the India experiment suggests that the company’s influence globally could be even worse. Most of the money Facebook spends on content moderation is focused on English-language media in countries like the US.

But the company’s growth largely comes from countries like India, Indonesia and Brazil, where it has struggled to hire people with the language skills to impose even basic oversight. The challenge is particularly acute in India, a country of 1.3 billion people with 22 official languages. Facebook has tended to outsource oversight for content on its platform to contractors from companies like Accenture.

“We’ve invested significantly in technology to find hate speech in various languages, including Hindi and Bengali,” a Facebook spokeswoman said. “As a result, we’ve reduced the amount of hate speech that people see by half this year. Hate speech against marginalised groups, including Muslims, is on the rise globally. So we are improving enforcement and are committed to updating our policies as hate speech evolves online.”

Research team’s trip to India

The new user test account was created in February 2019 during a research team’s trip to India, according to the report. Facebook is a “pretty empty place” without friends, the researchers wrote, with only the company’s Watch and Live tabs suggesting things to look at. When the video service Watch doesn’t know what a user wants, “it seems to recommend a bunch of softcore porn,” they wrote, followed by a frowning emoticon. The experiment began to turn dark on February 11, as the test user started to explore content recommended by Facebook, including posts that were popular across the social network. She began with benign sites, including the official page of Prime Minister Narendra Modi’s ruling Bharatiya Janata Party and BBC News India.

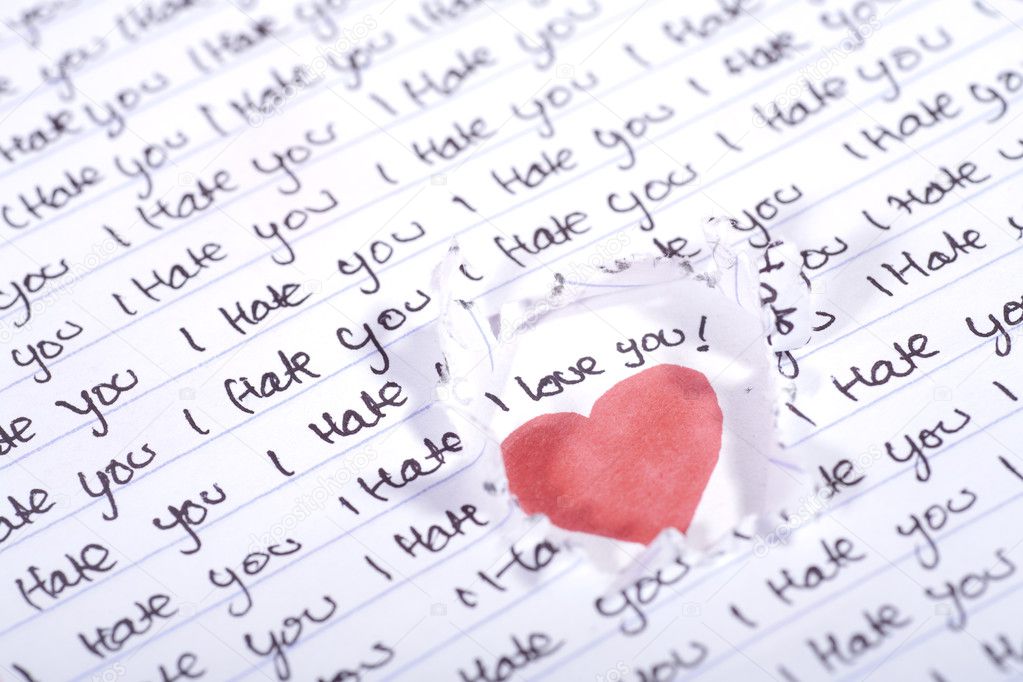


The trial account used the profile of a 21-year-old woman living in the western Indian city of Jaipur and hailing from Hyderabad.

The test proved telling because it was designed to focus exclusively on Facebook’s role in recommending content.

In February 2019, Facebook Inc set up a test account in India to determine how its own algorithms affect what people see in one of its fastest-growing and most important overseas markets. The results stunned the company’s own staff. Within three weeks, the new user’s feed turned into a maelstrom of fake news and incendiary images. There were graphic photos of beheadings, doctored images of Indian air strikes against Pakistan and jingoistic scenes of violence. One group for “things that make you laugh” included fake news of 300 terrorists who died in a bombing in Pakistan. “I’ve seen more images of dead people in the past 3 weeks than I’ve seen in my entire life total,” one staffer wrote, according to a 46-page research note that’s among the trove of documents released by Facebook whistleblower Frances Haugen.